Imagine standing at the edge of a vast landscape—rolling hills fading into misty mountains, trees receding into the distance, and a winding road disappearing over the horizon. Even though you’re viewing this scene with just one eye, your brain effortlessly constructs a rich, three-dimensional world. This remarkable ability comes from monocular cues for depth perception: visual signals that allow you to judge distance and spatial relationships using input from only one eye.

Unlike binocular vision, which relies on both eyes to calculate depth through subtle differences in perspective, monocular cues work in flat images, photographs, paintings, and real-world environments—even when one eye is closed or impaired. These cues are not just theoretical concepts; they’re essential tools your brain uses every day to navigate safely, drive accurately, and interpret the world around you.

In this guide, we’ll explore the seven key monocular cues—how each one works, what to look for, and why they matter in everyday life. Whether you’re an artist creating realistic scenes, a student studying psychology or neuroscience, or someone adapting to vision loss, understanding these cues can dramatically improve your spatial awareness and visual interpretation.

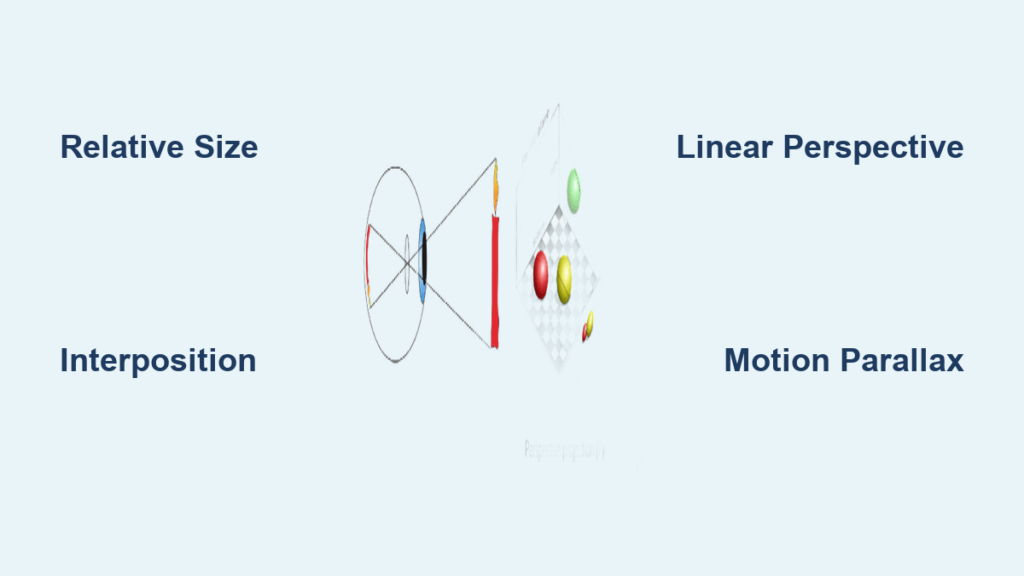

Relative Size: Bigger Objects Are Closer

Your brain assumes familiar objects maintain consistent size—so when one appears smaller, it must be farther away.

Why Size Differences Signal Distance

When two objects are known or assumed to be the same actual size, the one that projects a smaller image on your retina is interpreted as more distant. This cue depends heavily on prior knowledge: you know cars, people, and animals don’t shrink or grow suddenly, so differences in apparent size must reflect changes in distance.

Example: Two identical motorcycles on a highway—the one appearing larger is judged as closer, even if there are no other depth clues.

This cue is especially useful at medium to long distances where binocular vision becomes less effective. Drivers, pilots, and athletes constantly use relative size to estimate how far away objects are.
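The size-distance relationship described above can be sketched with the standard visual-angle formula, θ = 2·atan(size / (2·distance)). The 2 m motorcycle length and the two distances are illustrative assumptions, not measurements, and the function names are hypothetical.

```python
import math

def visual_angle_deg(size_m: float, distance_m: float) -> float:
    """Angle (in degrees) that an object of a given physical size subtends at the eye."""
    return math.degrees(2 * math.atan(size_m / (2 * distance_m)))

def distance_from_angle(size_m: float, angle_deg: float) -> float:
    """Invert the relation: distance implied by a known size and an observed angle."""
    return size_m / (2 * math.tan(math.radians(angle_deg) / 2))

# Two identical motorcycles (assumed 2 m long) at assumed distances of 20 m and 40 m.
near = visual_angle_deg(2.0, 20.0)
far = visual_angle_deg(2.0, 40.0)
print(f"near: {near:.2f} deg, far: {far:.2f} deg, ratio: {near / far:.2f}")
```

Doubling the distance roughly halves the visual angle. The inverse function only works because the true size is supplied, which is why the cue breaks down for unfamiliar objects.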

Common Pitfalls and Illusions

- Unfamiliar objects: If you don’t recognize the true size of something (like a drone or unknown animal), size-based judgments fail.

- Forced perspective photography: Placing small objects close to the camera makes them appear large—think of photos where someone “holds” the moon in their hand.

✅ Pro tip: Combine relative size with motion parallax (by moving your head) to confirm depth when uncertain.

Interposition (Overlap): When One Object Blocks Another

If an object covers part of another, it’s in front—no second eye needed.

How Occlusion Creates Instant Depth

Interposition, also called occlusion, is one of the most reliable monocular cues because it provides unambiguous depth ordering. When Object A partially hides Object B, your brain immediately knows A is closer.

Example: A lamppost blocking part of a building clearly appears in front—even if both are the same color and brightness.

Even minimal overlap—a sliver of one object covering another—triggers this perception. That’s why interposition works so well in flat visuals like diagrams, maps, and cartoons.

Use in Visual Design

Artists and designers use interposition to:

– Create layered compositions

– Suggest proximity without perspective lines

– Guide viewer attention through spatial hierarchy

🚫 Limitation: While interposition tells you which object is closer, it doesn’t tell you how much closer.
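One way to picture this limitation is to treat each observed overlap as a "front, back" pair and sort the objects. The sketch below uses Python's standard `graphlib` module (Python 3.9+); the object names and the `depth_order` helper are hypothetical. The result is an ordering only, with no distances attached.

```python
from graphlib import TopologicalSorter

def depth_order(occlusions):
    """Return objects ordered nearest-first from (front, back) occlusion pairs."""
    graph = {}
    for front, back in occlusions:
        graph.setdefault(front, set())
        # The occluding object must come before the object it hides.
        graph.setdefault(back, set()).add(front)
    return list(TopologicalSorter(graph).static_order())

# Assumed observations: the lamppost hides part of the tree,
# and the tree hides part of the building.
observed = [("lamppost", "tree"), ("tree", "building")]
order = depth_order(observed)
print(order)  # ['lamppost', 'tree', 'building']
```

Nothing in the output says whether the lamppost is one meter or fifty meters in front of the tree; other cues have to supply that.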

Linear Perspective: Parallel Lines Converge with Distance

As parallel lines extend into the distance, they appear to meet—your brain reads this as depth.

The Power of Vanishing Points

Railroad tracks, road edges, or rows of streetlights seem to converge toward a single point on the horizon—the vanishing point. The stronger the convergence, the greater the perceived depth.

Real-world example: A long hallway appears narrower as it stretches away, signaling increasing distance.
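The convergence can be reproduced with a simple pinhole projection, in which image position equals lateral position divided by depth. This is a minimal sketch: the rail spacing, eye height, and focal length of 1.0 are assumed values, and `project` and `rail_gap` are hypothetical helper names.

```python
def project(x: float, y: float, z: float, focal: float = 1.0) -> tuple[float, float]:
    """Pinhole projection of a 3D point (camera looking along +z) onto the image plane."""
    if z <= 0:
        raise ValueError("point must be in front of the camera")
    return (focal * x / z, focal * y / z)

def rail_gap(z: float, half_width: float = 0.75, eye_height: float = 1.6) -> float:
    """Image-plane gap between two parallel rails at depth z (assumed 1.5 m apart)."""
    left_x, _ = project(-half_width, -eye_height, z)
    right_x, _ = project(half_width, -eye_height, z)
    return right_x - left_x

for z in (2, 10, 50, 250):
    print(f"depth {z:>3} m -> gap between rails in the image: {rail_gap(z):.4f}")
```

The rails stay 1.5 m apart in the world, but their projected gap shrinks in proportion to 1/z, so both rails head toward the same image point: the vanishing point.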

Renaissance artists like Leonardo da Vinci mastered linear perspective to turn flat canvases into lifelike windows into space. Today, architects and filmmakers use it to create immersive environments.

What to Look For

- Roads narrowing toward the horizon

- Rows of trees or columns appearing closer together

- Building outlines slanting inward with height

🎨 Design tip: Exaggerating perspective can add drama or draw focus in visual storytelling.

Aerial Perspective: Distant Objects Appear Hazy and Bluer

The atmosphere scatters light, making faraway objects look paler, blurrier, and cooler in tone.

How Air Affects Visual Clarity

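Air molecules scatter short-wavelength (blue) light more strongly than red or yellow, an effect known as Rayleigh scattering, which gives distant landscapes a soft blue-gray tint. Larger particles such as dust, moisture, and pollution scatter all wavelengths more evenly, which adds haze and lowers contrast.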
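The wavelength dependence of Rayleigh scattering can be made concrete: scattered intensity is proportional to 1/λ⁴. The sketch below compares blue and red light; the specific wavelengths (450 nm and 650 nm) are representative assumptions, and `rayleigh_relative` is a hypothetical helper.

```python
def rayleigh_relative(wavelength_nm: float, reference_nm: float = 650.0) -> float:
    """Rayleigh scattering strength relative to a reference wavelength (proportional to 1/lambda^4)."""
    return (reference_nm / wavelength_nm) ** 4

blue_vs_red = rayleigh_relative(450.0)
print(f"Blue (450 nm) scatters about {blue_vs_red:.2f}x as strongly as red (650 nm)")
```

With these values blue light is scattered a little over four times as strongly as red, which is why the light added along a long sightline skews blue.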

Key signs of aerial perspective:

– Lower contrast

– Softer edges

– Desaturated colors

– Blurred detail

Example: Nearby trees show rich green hues and sharp leaves; distant hills appear faded and indistinct.

Fog, smog, and humidity intensify this effect, while clear days reduce it—making aerial cues context-sensitive.

🖌️ Artistic technique: Painters simulate depth by fading background colors and softening outlines, mimicking natural atmospheric effects.

Light and Shade: Shadows Reveal Shape and Position

Shading patterns tell your brain about contours, orientation, and spatial relationships.

How Highlights and Shadows Build 3D

The way light falls on surfaces reveals:

– Surface curvature (e.g., a sphere vs. a flat disk)

– Direction of the light source

– Whether parts face toward or away from you

Example: A ball lit from above has a bright top and shadowed bottom, which your brain interprets as a round, convex surface.

Your brain assumes light comes from above (like sunlight), so lighting from below (e.g., a lamp placed beneath the object) can make bumps appear hollow or the whole object appear upside-down.
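The bright-top, dark-bottom pattern follows from a simple shading model. The sketch below uses Lambertian (cosine) shading, a common simplification in graphics rather than a model of what the visual system computes; the light directions and surface normals are assumed values, and `lambert` is a hypothetical helper.

```python
import math

def lambert(normal, light_dir):
    """Lambertian brightness: cosine of the angle between the surface normal
    and the direction toward the light, clamped at 0 for surfaces facing away."""
    dot = sum(n * l for n, l in zip(normal, light_dir))
    n_len = math.sqrt(sum(n * n for n in normal))
    l_len = math.sqrt(sum(l * l for l in light_dir))
    return max(0.0, dot / (n_len * l_len))

# Axes: +y is up, +z points toward the viewer.
upper_patch = (0.0, 1.0, 1.0)    # part of the ball facing up and toward the viewer
lower_patch = (0.0, -1.0, 1.0)   # part of the ball facing down and toward the viewer

light_above = (0.0, 1.0, 0.5)    # assumed direction toward an overhead light
light_below = (0.0, -1.0, 0.5)   # same light placed below instead

top, bottom = lambert(upper_patch, light_above), lambert(lower_patch, light_above)
top_inv, bottom_inv = lambert(upper_patch, light_below), lambert(lower_patch, light_below)
print(f"lit from above: top={top:.2f}, bottom={bottom:.2f}")
print(f"lit from below: top={top_inv:.2f}, bottom={bottom_inv:.2f}")
```

Moving the light below the ball swaps the two brightness values. A viewer who still assumes overhead light would read that swapped pattern as a dent rather than a bump.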

Cast Shadows Add Depth

When an object casts a shadow on another surface, your brain infers:

– The casting object is closer

– The receiving surface is farther

– Their relative heights and positions

⚠️ Warning: Flat lighting removes critical depth cues, making scenes look two-dimensional.

Monocular Motion Parallax: Movement Reveals Depth

When you move, nearby objects shift faster across your vision than distant ones.

How Head Movement Enhances Depth

As you move side to side:

– Nearby objects move quickly across your field of view

– Distant objects move slowly; if you fixate on a mid-distance point, objects beyond it appear to drift in the opposite direction to nearer ones

Example: Riding in a car, roadside poles zip past while distant mountains glide slowly.
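The difference in apparent speed can be estimated with basic geometry: for a stationary object directly to the side of a moving observer, angular speed is approximately observer speed divided by distance (in radians per second). The car speed and object distances below are assumptions chosen for illustration, and the function name is hypothetical.

```python
import math

def angular_speed_deg_per_s(observer_speed_mps: float, distance_m: float) -> float:
    """Angular speed of a stationary object directly abeam of a moving observer."""
    return math.degrees(observer_speed_mps / distance_m)

CAR_SPEED_MPS = 25.0  # assumed, about 90 km/h

pole = angular_speed_deg_per_s(CAR_SPEED_MPS, 5.0)         # roadside pole, assumed 5 m away
mountain = angular_speed_deg_per_s(CAR_SPEED_MPS, 5000.0)  # mountain, assumed 5 km away
print(f"pole: {pole:.1f} deg/s, mountain: {mountain:.3f} deg/s")
```

Because angular speed scales with 1/distance, the pole sweeps across the view a thousand times faster than the mountain in this example. The same relation explains why a small head sway helps most with nearby objects.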

This dynamic cue is invaluable for people with monocular vision. By swaying their heads, they simulate the depth information normally provided by two eyes.

🔁 Practical tip: Gently rock your head when judging distances with one eye—this boosts motion parallax and improves accuracy.

Texture Gradient: Detail Fades with Distance

Surfaces lose visible texture as they recede—your brain detects this gradient as depth.

From Coarse to Smooth: A Visual Clue

Up close, textures are rich and distinct—individual blades of grass, pebbles on a path, or bricks on a wall. As surfaces stretch into the distance, these details compress and blur into a smooth, uniform appearance.

Example: Grass near your feet shows clear blades; the same field 100 meters away looks like a solid green sheet.

Your brain interprets this texture compression as increasing distance: coarse = close, fine = far.
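The compression can be estimated geometrically. The sketch below computes the vertical visual angle of a small ground patch seen from standing eye height at several distances; the 1.6 m eye height and 10 cm patch are assumed values, and `patch_angle_deg` is a hypothetical helper.

```python
import math

def patch_angle_deg(eye_height_m: float, distance_m: float, patch_depth_m: float) -> float:
    """Vertical visual angle of a ground patch that starts at distance_m
    and extends patch_depth_m farther away from the observer."""
    near_edge = math.atan2(eye_height_m, distance_m)
    far_edge = math.atan2(eye_height_m, distance_m + patch_depth_m)
    return math.degrees(near_edge - far_edge)

EYE_HEIGHT_M = 1.6   # assumed standing eye height
PATCH_DEPTH_M = 0.1  # assumed 10 cm tuft of grass

angles = {d: patch_angle_deg(EYE_HEIGHT_M, d, PATCH_DEPTH_M) for d in (1, 5, 25, 100)}
for d, angle in angles.items():
    print(f"{d:>3} m away -> {angle:.4f} deg")
```

The same patch shrinks from a few degrees at arm's-length distances to a tiny fraction of a degree at 100 m, so individual elements merge into a uniform surface.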

🖼️ In digital media: Game developers use texture gradients to create realistic terrain without heavy rendering.

Monocular vs. Binocular Vision: What’s the Difference?

One eye vs. two—each has strengths depending on context and distance.

| Feature | Monocular Cues | Binocular Cues |
|---|---|---|
| Eyes required | One | Two |
| Best range | Medium to long distances | Short to medium (<6 meters) |
| Relies on movement? | Yes (motion parallax) | No |
| Accuracy | Moderate, learned through experience | High, based on direct physiological input |
| Used in 2D media? | Yes (art, photos, screens) | No |
| Vulnerable to illusions? | More (context-dependent) | Less |

Key insight: Binocular cues like retinal disparity and convergence offer precise depth at close range—but monocular cues dominate in real-world navigation, driving, and viewing art.

People with monocular vision adapt by combining multiple cues—especially motion parallax, shading, and perspective—for surprisingly accurate depth judgment.

Conditions That Impair Depth Perception

Vision disorders can disrupt peripheral input and weaken depth cues.

Common Causes

- Glaucoma: Damages optic nerve, leading to tunnel vision

- Retinitis pigmentosa: Genetic loss of peripheral vision

- Stroke: Disrupts brain areas processing visual space

- Detached retina: Causes sudden vision loss

- Scotoma: Blind spots interrupting scene continuity

These conditions reduce available visual data, making depth interpretation harder—especially in low light or cluttered environments.

Adaptive Strategies

Individuals with monocular or impaired vision can:

– Use head movements to enhance motion parallax

– Focus on shadows, perspective, and texture

– Rely on memory of object sizes

– Increase visual scanning and environmental awareness

“The more cues a person uses together, the more accurate their depth perception becomes.”

Regular eye exams help detect issues early and preserve functional vision.

Real-World Applications of Monocular Cues

From art to aviation, these cues shape how we see and interact with the world.

In Art and Photography

Artists simulate depth using:

– Linear perspective for architectural realism

– Aerial perspective in landscapes

– Shading to model form

– Texture gradients to show surface recession

Photographers use shallow depth-of-field to blur backgrounds, enhancing perceived distance.

In Driving and Navigation

Drivers rely on:

– Relative size to judge vehicle distance

– Motion parallax during lane changes

– Interposition to assess who’s ahead

– Linear perspective on straight roads

Even with limited binocular input at long range, monocular cues let drivers judge gaps, closing speeds, and following distances.

In Virtual Reality and Design

Game developers and UX designers apply these cues to:

– Build immersive 3D environments

– Guide user attention

– Prevent visual fatigue

Understanding monocular perception helps create intuitive interfaces—even on flat screens.

Final Takeaways

Monocular cues for depth perception transform flat retinal images into a dynamic, three-dimensional experience. The seven primary cues—relative size, interposition, linear perspective, aerial perspective, light and shade, motion parallax, and texture gradient—allow your brain to infer depth using just one eye.

While less precise than binocular vision, these cues are:

– Learned through experience

– Highly effective in real-world settings

– Critical for people with monocular vision

– Widely used in art, design, and technology

By recognizing and leveraging these cues, you can improve spatial awareness, avoid misjudgments, and better understand how vision shapes perception.

Action steps:

– Practice identifying cues in daily scenes (e.g., while walking or driving)

– Use head motion to enhance depth judgment if vision is limited

– Schedule regular eye exams to maintain visual health

Depth isn’t just seen—it’s interpreted. And with the right cues, even one eye can see the world in 3D.